Crawling JD Books with a distributed crawler using scrapy_redis (detailed)

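Building a distributed crawler for JD Books

- What is a distributed crawler? The same crawler program is installed on several machines, and the key point is that they collect data cooperatively: everyone works together to finish one job.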

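Preparation

- Install Redis. There are plenty of installation tutorials online, so I won't repeat them here.

- pip install scrapy_redis

- To demonstrate the distributed effect, prepare several servers or start a few virtual machines. I started one Ubuntu VM here.

- The crawler code is at the end of the article.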

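Analyzing the target site

- All categories of JD Books

- Approach – 1. Group by top-level category, then take each subcategory under it together with its URL and build a request from that URL.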

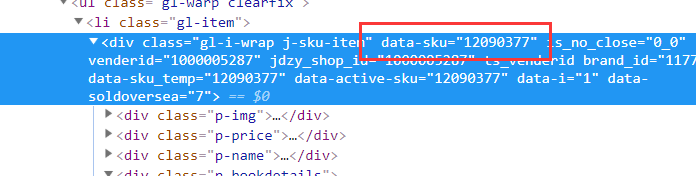

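– 2. Open one of the subcategories and analyze the page. This is the list of books under that subcategory. Inspecting the page with the browser's developer tools shows that each book's information sits inside one li tag; expanding the li reveals the title, cover image, author, publisher, publication date, and so on.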

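– At this point you will find that the price is nowhere in the page source. Go back to the Network panel of the developer tools and press ctrl+shift+ to search for the book's price.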

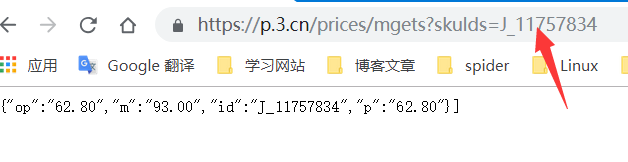

The search shows that the price comes from an address like this: https://p.3.cn/prices/mgets?callback=jQuery1091036&ext=11101000&pin=&type=1&area=1_72_4137_0&skuIds=J_12090377%2CJ_11757834%2CJ_10616501%2CJ_12192773%2CJ_12350509%2CJ_12155241%2CJ_10960247%2CJ_12479361%2CJ_12174897%2CJ_10162899%2CJ_12182317%2CJ_10367073%2CJ_11711801%2CJ_10019917%2CJ_11711801%2CJ_12018031%2CJ_12174895%2CJ_10199768%2CJ_11711801%2CJ_12114139%2CJ_12173835%2CJ_12160627%2CJ_11716978%2CJ_12406846%2CJ_12174923%2CJ_12052646%2CJ_11982184%2CJ_12271618%2CJ_12041776%2CJ_11982172&pdbp=0&pdtk=&pdpin=&pduid=15481474707961881713391&source=list_pc_front&_=1548843311943z

The response carries price information for many books as a JSON string. Look closely and you will notice the pattern shown below: each entry of this form is the information for one book, and the values in skuIds are simply the book ids.

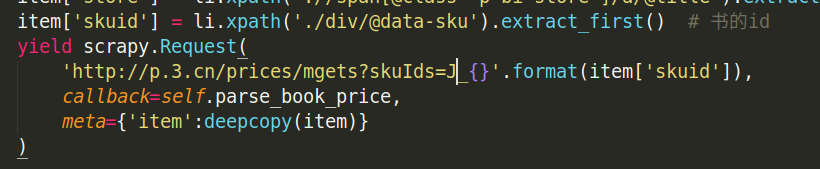

So we can use the book id to build the price URL for each book.
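As a rough sketch of that idea, the snippet below builds a price URL from a batch of book ids and reads the `op` field that the spider at the end of the article uses. The helper names `build_price_url` and `parse_prices` are made up for illustration, the `id` field in the response is an assumption, and the sample response body is invented rather than captured from the site.

```python
import json
from urllib.parse import urlencode

# Endpoint taken from the captured request; the other query parameters are left out here.
PRICE_ENDPOINT = "https://p.3.cn/prices/mgets"


def build_price_url(sku_ids):
    """Build one price URL for a batch of book ids (hypothetical helper)."""
    # Each id gets the "J_" prefix seen in the captured URL; urlencode turns
    # the separating commas into %2C, as in that URL.
    joined = ",".join("J_{}".format(sku) for sku in sku_ids)
    return PRICE_ENDPOINT + "?" + urlencode({"skuIds": joined})


def parse_prices(body):
    """Map each id in the JSON response to its 'op' field (assumed response shape)."""
    return {entry["id"]: entry["op"] for entry in json.loads(body)}


url = build_price_url(["12090377", "11757834"])
print(url)

# Invented response body in the assumed shape, for illustration only.
sample_body = '[{"id": "J_12090377", "op": "59.00"}, {"id": "J_11757834", "op": "45.00"}]'
prices = parse_prices(sample_body)
print(prices)
```

Requesting several ids per URL keeps the number of price requests low; the spider at the end of the article takes the simpler route of one request per book.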

Starting the project

- First create a project: scrapy startproject book

- cd book

- scrapy genspider jd (genspider also expects the site's domain after the spider name, e.g. jd.com)

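To get the distributed behavior, a few changes are required

- In settings.py:

Change the IP address from 127.0.0.1 to this machine's own IP. This is required so that the other server can connect.

- In the spider code: the price URL is on a different domain from the book pages, so that domain has to be added to allowed_domains as well.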

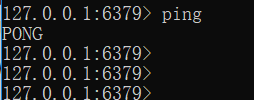
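For reference, a settings.py fragment along these lines is a common way to switch a Scrapy project over to scrapy_redis. It is a sketch rather than the exact settings used in this project (those are only shown in the screenshot), and the IP address is a placeholder to be replaced with the address of the machine running Redis.

```python
# settings.py (fragment)

# Use the scrapy_redis scheduler so every machine takes requests from one shared queue.
SCHEDULER = "scrapy_redis.scheduler.Scheduler"

# Keep request fingerprints in Redis so a URL crawled by one machine is skipped by the others.
DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"

# Keep the queue and the fingerprint set in Redis when a spider stops.
SCHEDULER_PERSIST = True

# Placeholder address: use the real IP of the Redis machine, not 127.0.0.1.
REDIS_URL = "redis://192.168.1.100:6379"
```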

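- On Windows: open cmd, go to the Redis installation folder, and run redis-cli to connect (redis-cli is the client, so the Redis server must already be running, for example as a Windows service). Type ping; a reply of PONG means Redis is up.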

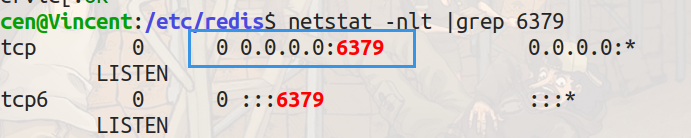

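- On Ubuntu: start Redis with sudo /etc/init.d/redis-server start, then ping it in the same way.

- Next, zip the book project and copy it to the other crawler server. With a virtual machine you can simply drag the archive onto the Ubuntu desktop. Unzip it (unzip book.zip), go into the spiders folder inside book, and from that directory run scrapy runspider on the spider file.

The spider now waits for us to supply the start URL. In a Redis command line, either the one opened on Windows earlier or the one on Ubuntu, enter lpush jd:start_urls followed by the start URL (the commented-out start_urls in the spider code is https://book.jd.com/booksort.html) and press Enter. jd:start_urls is the redis_key set in the spider; any name works for redis_key as long as the key you push to matches it. The crawler on Ubuntu starts crawling at this point. Back on Windows, use cmd to enter the same spiders folder under book and run the same scrapy runspider command. Because the start URL has already been supplied for the other server, there is no need to lpush again here.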
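The same lpush can also be issued from Python instead of the Redis command line. The sketch below is one way to do it; `seed_start_url` and `InMemoryClient` are hypothetical names, and the in-memory class only mimics `lpush` so the example runs without a server. With the redis package installed, a real `redis.Redis` client can be passed in its place.

```python
START_KEY = "jd:start_urls"  # must match redis_key in the spider
START_URL = "https://book.jd.com/booksort.html"  # the commented-out start_urls value


def seed_start_url(client, key=START_KEY, url=START_URL):
    """Push the start URL onto the shared list and return the new list length."""
    return client.lpush(key, url)


class InMemoryClient:
    """Tiny stand-in offering the same lpush call, so the sketch runs without Redis."""

    def __init__(self):
        self.lists = {}

    def lpush(self, key, *values):
        items = self.lists.setdefault(key, [])
        for value in values:
            items.insert(0, value)  # LPUSH adds at the head of the list
        return len(items)


client = InMemoryClient()
queue_length = seed_start_url(client)
print(queue_length, client.lists[START_KEY])

# Against a real server (needs `pip install redis`; the IP is a placeholder):
#   import redis
#   seed_start_url(redis.Redis(host="192.168.1.100", port=6379))
```

Only one machine needs to push the URL: every waiting spider reads from the same key, and the requests that follow are shared through Redis.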

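Result

Both servers are now crawling at the same time. (File uploads are size-limited, so I can only show a short GIF.)

Fixing failed remote connections to Redis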

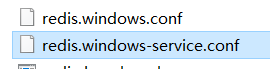

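- On Windows: in the Redis installation folder, find the two configuration files shown in the screenshot. Comment out bind 127.0.0.1 in them, then change protected-mode yes to protected-mode no.

Restart Redis. If you are not sure how, search for Services, find Redis in the list, right-click it, and choose Restart.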

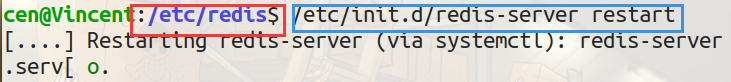

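- On Ubuntu: run sudo vi /etc/redis/redis.conf, comment out the 127.0.0.1 bind line, and restart the Redis service. Note that I had already changed into the Redis directory; if you have not, type out the full paths.

After this, the Redis instance on Ubuntu can be reached remotely.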
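To confirm from the other machine that the change took effect, a small check like the following can help. It is a sketch using only the standard library: it sends Redis an inline PING over a plain socket and looks for +PONG in the reply. The function names and the IP address are placeholders, and the denied reply shown is abbreviated by hand.

```python
import socket


def is_pong(reply):
    """Return True if the raw reply is Redis's answer to PING."""
    return reply.strip() == b"+PONG"


def redis_ping(host, port=6379, timeout=3.0):
    """Send an inline PING to a Redis server and return the raw reply bytes."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(b"PING\r\n")
        return conn.recv(1024)


# Replies written out by hand for illustration (the second one is abbreviated).
ok_reply = b"+PONG\r\n"
denied_reply = b"-DENIED Redis is running in protected mode\r\n"
print(is_pong(ok_reply), is_pong(denied_reply))

# From the other machine (placeholder IP):
#   print(is_pong(redis_ping("192.168.1.100")))
```

If bind still limits Redis to 127.0.0.1, the connection itself is refused and `redis_ping` raises an error; if only protected mode is still on, the connection succeeds but the reply starts with -DENIED instead of +PONG.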

Complete crawler code

    # -*- coding: utf-8 -*-
    from scrapy_redis.spiders import RedisSpider
    import scrapy
    import json
    import urllib.parse
    from copy import deepcopy


    # class BookSpider(scrapy.Spider):
    class BookSpider(RedisSpider):
        name = 'jd'
        allowed_domains = ['jd.com', 'p.3.cn']
        # start_urls = ['https://book.jd.com/booksort.html']
        redis_key = 'jd:start_urls'

        def parse(self, response):
            item = {}
            # Top-level categories
            dt_list = response.xpath('//div[@class="mc"]/dl/dt')
            for dt in dt_list:
                item['b_cate'] = dt.xpath('./a/text()').extract_first()  # top-level category title
                em_list = dt.xpath('./following-sibling::dd[1]/em')  # subcategories
                for em in em_list:
                    item['s_cate'] = em.xpath('./a/text()').extract_first()
                    item['s_href'] = em.xpath('./a/@href').extract_first()
                    if item['s_href'] is not None:
                        item['s_href'] = 'https:' + item['s_href']
                        # Build the request for the subcategory page
                        yield scrapy.Request(
                            item['s_href'],
                            callback=self.parse_book_list,
                            # Deep copy so each request gets its own item and data is not overwritten
                            meta={'item': deepcopy(item)}
                        )

        # Parse a book list page
        def parse_book_list(self, response):
            item = response.meta['item']
            li_list = response.xpath('//div[@id="plist"]/ul/li')
            for li in li_list:
                item['book_name'] = li.xpath('.//div[@class="p-name"]/a/em/text()').extract_first().strip()
                item['book_href'] = 'https:' + li.xpath('.//div[@class="p-img"]/a/@href').extract_first()
                item['book_img'] = li.xpath('.//div[@class="p-img"]/a/img/@src').extract_first()
                if item['book_img'] is None:
                    item['book_img'] = li.xpath('.//div[@class="p-img"]/a/img/@data-lazy-img').extract_first()
                item['book_img'] = 'https:' + item['book_img'] if item['book_img'] is not None else None
                item['authors'] = li.xpath('.//span[@class="p-bi-name"]/span/a//text()').extract()
                item['publish_date'] = li.xpath('.//span[@class="p-bi-date"]/text()').extract_first().strip()
                item['store'] = li.xpath('.//span[@class="p-bi-store"]/a/@title').extract_first()
                item['skuid'] = li.xpath('./div/@data-sku').extract_first()
                yield scrapy.Request(
                    'http://p.3.cn/prices/mgets?skuIds=J_{}'.format(item['skuid']),  # price endpoint
                    callback=self.parse_book_price,
                    meta={'item': deepcopy(item)}
                )

            # Find the URL of the next page
            next_url = response.xpath('//a[@class="pn-next"]/@href').extract_first()
            if next_url is not None:
                next_url = urllib.parse.urljoin(response.url, next_url)
                # Build the request for the next page
                yield scrapy.Request(
                    next_url,
                    callback=self.parse_book_list,
                    meta={'item': item}
                )

        # Fetch the price
        def parse_book_price(self, response):
            item = response.meta['item']
            item['price'] = json.loads(response.body.decode())[0]['op']
            # The item is only printed here to check the result; saving it is left to you
            print(item)
            # yield item
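Since the spider only prints each item and leaves saving to the reader, here is one possible way to persist the results: a small item pipeline that appends every item to a file as one JSON line. This is a sketch, not part of the original project. The class name `JsonLinesPipeline` and the file name `books.jl` are made up, and the pipeline only receives items once `yield item` is uncommented in `parse_book_price`.

```python
import json


class JsonLinesPipeline:
    """Hypothetical item pipeline: append each item to a file as one JSON line."""

    def __init__(self, path="books.jl"):
        self.path = path
        self.file = None

    def open_spider(self, spider):
        # Called once when the spider starts.
        self.file = open(self.path, "a", encoding="utf-8")

    def close_spider(self, spider):
        # Called once when the spider finishes.
        self.file.close()

    def process_item(self, item, spider):
        # ensure_ascii=False keeps Chinese titles readable in the output file.
        self.file.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item
```

To enable it, the class would be registered in settings.py, for example ITEM_PIPELINES = {"book.pipelines.JsonLinesPipeline": 300}, assuming it is placed in the project's pipelines.py. Each machine then writes to its own local file, so the outputs have to be merged afterwards.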

Reposted from: http://nzhgn.baihongyu.com/
